The telecommunications industry has reached a critical tipping point. Traditional, on-premises-heavy data center models are struggling under the weight of escalating infrastructure costs and underutilization driven by availability and compliance requirements. But the AI era demands exponential scale and beyond-nines reliability. The question for operators is no longer whether they should modernize, but which architectural path will help them do that fastest.

Modernization isn’t a “rip and replace” event; it’s a strategic choice. Today, we’re showcasing how Google Kubernetes Engine (GKE) can serve as a high-performance foundation for two versatile deployment strategies: cloud-centric evolution and strategic hybrid modernization.

The two paths to network modernization

Every operator has its own appetite for risk, regulatory landscape, and investment base: some prioritize agility, while others emphasize local control. You can use GKE to support both approaches:

1. Cloud-centric modernization: Agility at scale

This path is for operators looking to fully harness the cloud’s elasticity. Whether you’re migrating your own containerized network functions (CNFs) or building a cloud-native service like Ericsson-on-Demand, the goal is the same: move the heavy lifting to Google Cloud.

The benefit: By running mission-critical workloads like Voice Core or Policy Control Functions on Google’s global fiber backbone, operators can scale instantly for peak events and move toward “zero-human-touch” operations.

The economics: Transition from heavy upfront CAPEX to a “pay-as-you-grow” model. You no longer need to over-provision hardware that sits idle; the cloud absorbs the bursts for you.

Time to market: Accelerate the launch of new services such as fixed wireless access, IoT, and private 5G.

2. Strategic hybrid modernization: Cloud agility, local control

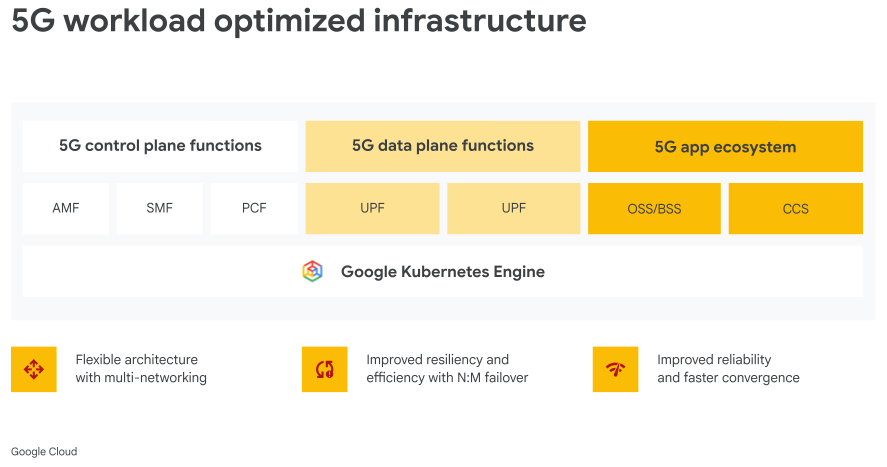

For many telcos, a hybrid approach offers a better balance. Here, operators can selectively move agile control plane components and data analytics to the cloud while keeping latency-sensitive user-plane functions on premises or at the edge.

The benefit: Optimize for ultra-low latency and meet strict data sovereignty requirements by keeping data plane traffic local, while still gaining the AI-driven insights and orchestration power of the cloud.

The versatility: Using GKE, you can run your control plane workloads in the cloud and data plane services directly in your own data centers or at the network edge, enjoying a unified operational model across your environments.

Engineering the “telco-grade” foundation

Today, we are proud to showcase how GKE has evolved into the industry’s most specialized platform for containerized network functions (CNFs), backed by massive momentum from operators and equipment vendor partners.

Several capabilities make this possible.

Connectivity and isolation

Standard Kubernetes wasn’t designed for the complex traffic separation that telcos require. GKE bridges this gap with:

Multi-networking API: A native Kubernetes way to manage multiple interfaces per Pod, bringing standard Network Policies to every interface.

Simulated L2 networking: A “migration superpower” that allows legacy applications to maintain their Layer-2 operational model while running on a modern cloud-native stack.

The telco CNI: Support for Multus, IPvlan, and Whereabouts on specialized Ubuntu images. This allows operators to isolate management, control, and user planes with surgical precision.
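To make the plane separation above more concrete, here is a minimal sketch of how Multus, IPvlan, and Whereabouts typically fit together: one NetworkAttachmentDefinition per plane, plus a Pod annotation that requests all three. The network names, host interfaces, IP ranges, Pod name, and image are hypothetical placeholders, and the sketch only generates manifests; it does not describe how GKE configures these components internally.

```python
import json


def ipvlan_attachment(name: str, master: str, ip_range: str) -> dict:
    """Build a Multus NetworkAttachmentDefinition using ipvlan with Whereabouts IPAM."""
    cni_config = {
        "cniVersion": "0.3.1",
        "name": name,
        "type": "ipvlan",
        "master": master,  # host interface the ipvlan sub-interface attaches to
        "mode": "l2",
        "ipam": {"type": "whereabouts", "range": ip_range},
    }
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {"name": name},
        # Multus expects the CNI configuration as a JSON string in spec.config.
        "spec": {"config": json.dumps(cni_config)},
    }


def pod_with_networks(pod_name: str, image: str, networks: list) -> dict:
    """Build a Pod manifest that asks Multus for one extra interface per network."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {
            "name": pod_name,
            "annotations": {"k8s.v1.cni.cncf.io/networks": ",".join(networks)},
        },
        "spec": {"containers": [{"name": pod_name, "image": image}]},
    }


# Hypothetical planes: (host interface, address range). Adjust to your environment.
PLANES = {
    "mgmt-net": ("eth1", "192.168.10.0/24"),
    "control-net": ("eth2", "192.168.20.0/24"),
    "user-net": ("eth3", "192.168.30.0/24"),
}

attachments = [
    ipvlan_attachment(name, master, ip_range)
    for name, (master, ip_range) in PLANES.items()
]
pod = pod_with_networks("upf-example", "example.com/upf:latest", list(PLANES))

# Print the manifests as JSON, which kubectl accepts as well as YAML.
for manifest in attachments + [pod]:
    print(json.dumps(manifest, indent=2))
```

Each generated manifest could be saved to a file and applied with `kubectl apply -f`, assuming the cluster already has Multus, the ipvlan plugin, and Whereabouts installed.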

Persistent reachability

In a world of ephemeral containers, telco functions need stability. GKE enables this through:

GKE IP route: We’ve integrated equal-cost multi-path (ECMP)-like functionality directly into the GKE dataplane. If a workload fails, it is automatically and rapidly removed from the service path, providing high availability without complex external router configurations.

Persistent IP: GKE provides the static IP support, without NAT, that 5G core functions require for consistent reachability across their lifecycle. Standard Kubernetes does not offer this.
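The idea behind ECMP-like load sharing with automatic removal of failed workloads can be illustrated with a small, self-contained sketch. It is not GKE's implementation: it swaps in rendezvous hashing as a simple stand-in, and the backend addresses and flow tuples are hypothetical. It shows that flows spread across healthy backends and that, when one backend fails, only the flows it was serving move.

```python
import hashlib


def pick_next_hop(flow: tuple, healthy_hops: list) -> str:
    """Pick the healthy hop with the highest hash score for this flow.

    This is rendezvous hashing: removing a hop only remaps the flows that
    were assigned to it; every other flow keeps its original hop.
    """
    if not healthy_hops:
        raise RuntimeError("no healthy next hops available")

    def score(hop: str) -> bytes:
        key = "|".join(str(field) for field in flow) + "|" + hop
        return hashlib.sha256(key.encode()).digest()

    return max(healthy_hops, key=score)


# Hypothetical backends and 5-tuple flows (src IP, src port, dst IP, dst port, proto).
hops = ["10.0.0.11", "10.0.0.12", "10.0.0.13"]
flows = [
    ("198.51.100.%d" % i, 40000 + i, "203.0.113.10", 2152, "udp")
    for i in range(50)
]

# Steady state: every flow is pinned to one of the three backends.
before = {flow: pick_next_hop(flow, hops) for flow in flows}

# Simulate a failure: a health check has removed one backend from the path.
failed = "10.0.0.12"
survivors = [hop for hop in hops if hop != failed]
after = {flow: pick_next_hop(flow, survivors) for flow in flows}

moved = [flow for flow in flows if before[flow] != after[flow]]
print("flows on failed backend before removal:",
      sum(1 for flow in flows if before[flow] == failed))
print("flows remapped after removal:", len(moved))
```

The two printed counts match, because only flows that were on the failed backend are remapped. A plain hash-modulo-N scheme would also balance load, but it would reshuffle many unaffected flows whenever the backend count changes.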

Sub-second convergence

For telcos, every millisecond of downtime is a lost connection. Through HA Policy, GKE’s dataplane is optimized for near-zero downtime with ultra-fast failure detection and convergence, and operators can choose between self-managed recovery and fully Google-managed failure detection.

Shifting from “saving” to “solving” with AI

For operators, the ultimate goal of modernization is to transition to an autonomous network. By running the core network functions on a platform adjacent to Google Cloud AI and data platforms such as Vertex AI and BigQuery, they can turn telemetry into actionable changes to optimize the network. Some use cases and benefits that modernization enables include:

Predictive AIOps: Use AI to identify performance degradation and trigger automated healing before a call ever drops. Use the cloud for on-demand burst capacity during sporting events or service launches. Or use the data from your GKE-hosted 5G core to fuel AI-powered automation that anticipates issues before they impact subscribers.

Intent-driven programmability: Shift away from expensive, reactive operations and cut new deployment setup times from several weeks to a couple of hours.

Monetize insights: Leverage AI on cloud-native data to identify and capture entirely new revenue opportunities in addition to rightsizing your networks.

Your journey, your terms

The future of telco is intelligent, resilient, and incredibly flexible. Whether you are taking your first step into a hybrid deployment or launching a fully cloud-hosted core, Google Cloud is your strategic partner.

Join us at MWC: Visit booth #2H40 in Hall 2 to see these solutions in action, including live demonstrations of mobile core running on GKE.

Read more here: https://cloud.google.com/blog/products/networking/gke-for-telco-building-a-highly-resilient-ai-native-core/